Preliminary considerations such as scalability, reliability, adaptability, cost in terms of development time, etc. It does not store any personal data.When building big data pipelines, we need to think on how to ingest the volume, variety, and velocity of data showing up at the gates of what would typically be a Hadoop ecosystem. The cookie is set by the GDPR Cookie Consent plugin and is used to store whether or not user has consented to the use of cookies. The cookie is used to store the user consent for the cookies in the category "Performance".

This cookie is set by GDPR Cookie Consent plugin. The cookie is used to store the user consent for the cookies in the category "Other.

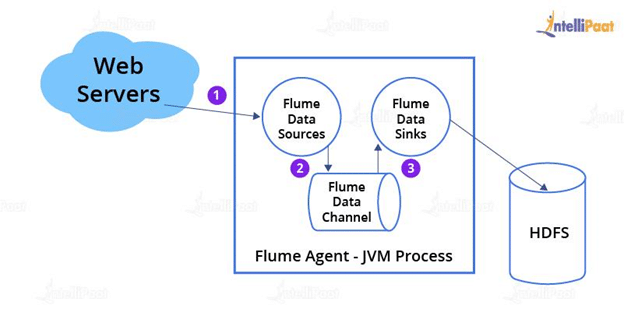

The cookies is used to store the user consent for the cookies in the category "Necessary". The cookie is set by GDPR cookie consent to record the user consent for the cookies in the category "Functional". The cookie is used to store the user consent for the cookies in the category "Analytics". These cookies ensure basic functionalities and security features of the website, anonymously. Necessary cookies are absolutely essential for the website to function properly. Given this configuration file, we can start Flume as follows: A given configuration file might define several named agents when a given Flume process is launched a flag is passed telling it which named agent to manifest. The configuration file names the various components, then describes their types and configuration parameters. a1 has a source that listens for data on port 44444, a channel that buffers event data in memory, and a sink that logs event data to the console. This configuration defines a single agent named a1. # nf: A single-node Flume configuration # Name the components on this agent a1.sources = r1 a1.sinks = k1 a1.channels = c1 # Describe/configure the source a1.sources.r1.type = netcat a1.sources.r1.bind = localhost a1.sources.r1.port = 44444 # Describe the sink a1.sinks.k1.type = logger # Use a channel which buffers events in memory a1.channels.c1.type = memory a1.channels.c1.capacity = 1000 a1.channels.c1.transactionCapacity = 100 # Bind the source and sink to the channel a1.sources.r1.channels = c1 a1.sinks.k1.channel = c1 The source and sink within the given agent run asynchronously with the events staged in the channel. The sink removes the event from the channel and puts it into an external repository like HDFS (via Flume HDFS sink) or forwards it to the Flume source of the next Flume agent (next hop) in the flow. The file channel is one example – it is backed by the local filesystem. The channel is a passive store that keeps the event until it’s consumed by a Flume sink. A similar flow can be defined using a Thrift Flume Source to receive events from a Thrift Sink or a Flume Thrift Rpc Client or Thrift clients written in any language generated from the Flume thrift protocol.When a Flume source receives an event, it stores it into one or more channels. For example, an Avro Flume source can be used to receive Avro events from Avro clients or other Flume agents in the flow that send events from an Avro sink. The external source sends events to Flume in a format that is recognized by the target Flume source. A Flume source consumes events delivered to it by an external source like a web server.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed